How To Audit rel=”next” and rel=”prev” Pagination Attributes

How To Audit rel=”next” and rel=”prev” Using The SEO Spider

The rel=”next” and rel=”prev” pagination attributes are used to indicate the relationship between component URLs in a paginated series to search engines. They help consolidate indexing properties in the sequence, and direct users to the most relevant URL within the series.

They are commonly used on, for example, ecommerce category pages that display many products split across multiple URLs, articles that have been broken into shorter pieces, and forum threads.
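As a rough illustration of what these attributes look like in a page's HTML and how they can be read, here is a minimal Python sketch using only the standard library. The `PaginationLinkParser` class, the `extract_pagination` function and the example.com URLs are hypothetical names made up for this example. This is not how the SEO Spider itself works.

```python
from html.parser import HTMLParser


class PaginationLinkParser(HTMLParser):
    """Collect href values from <link rel="next"> and <link rel="prev"> elements."""

    def __init__(self):
        super().__init__()
        self.pagination = {"next": [], "prev": []}

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        # rel can hold several space-separated tokens and is case-insensitive.
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        href = attr_map.get("href")
        for rel in ("next", "prev"):
            if rel in rel_tokens and href:
                self.pagination[rel].append(href)


def extract_pagination(html):
    """Return a dict with the rel="next" and rel="prev" URLs found in the HTML."""
    parser = PaginationLinkParser()
    parser.feed(html)
    return parser.pagination


# Hypothetical page 2 of a paginated category (assumed markup, for illustration).
PAGE_2 = """
<html><head>
<link rel="prev" href="https://example.com/category/">
<link rel="next" href="https://example.com/category/page/3/">
</head><body></body></html>
"""

print(extract_pagination(PAGE_2))
```

A page in the middle of a series would normally carry one of each attribute, as in the sample above, while the first page would carry only rel="next".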

While they are a relatively simple concept, it’s extremely common for websites to implement pagination attributes incorrectly. Although Google announced on the 21st of March 2019 that they have not used rel=”next” and rel=”prev” in indexing for a long time, other search engines such as Bing (which also powers Yahoo) still use them as a hint for discovery and understanding site structure.

This tutorial walks you through how you can use the Screaming Frog SEO Spider to check rel=”next” and rel=”prev” pagination implementation quickly and efficiently. The SEO Spider will crawl pagination attributes and report on their set-up and common errors.

To get started, you’ll need to download the SEO Spider, own a paid licence, and then follow these steps.

1) Select ‘Crawl’ & ‘Store’ Pagination under ‘Configuration > Spider’

The SEO Spider ‘Configuration’ is available in the top level menu.

By default ‘crawl’ is disabled, so enabling it means URLs referenced in rel=”next” and rel=”prev” attributes will be crawled, as well as extracted and reported. This option will only make a difference to the crawl if these pages are not already linked to with regular anchor tags. Once both options are selected, click ‘OK’.
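To see whether a page's pagination URLs are already linked to with regular anchor tags, the comparison can be sketched in Python as below. This is a simplified illustration with hypothetical names (`LinkCollector`, `pagination_urls_not_in_anchors`) and made-up example.com URLs. It assumes the page HTML is already available as a string, and it is not the SEO Spider's own logic.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Gather absolute URLs from <a href> and from <link rel="next|prev">."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.pagination_urls = set()
        self.anchor_urls = set()

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if not href:
            return
        # Resolve relative hrefs against the page URL so both sets compare fairly.
        url = urljoin(self.base_url, href)
        if tag == "a":
            self.anchor_urls.add(url)
        elif tag == "link":
            rel_tokens = (attr_map.get("rel") or "").lower().split()
            if "next" in rel_tokens or "prev" in rel_tokens:
                self.pagination_urls.add(url)


def pagination_urls_not_in_anchors(base_url, html):
    """Return pagination URLs that never appear as an anchor href on the page."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return sorted(collector.pagination_urls - collector.anchor_urls)


# Hypothetical page: the previous page is linked with an anchor, the next is not.
SAMPLE = """
<head>
<link rel="prev" href="/shoes/">
<link rel="next" href="/shoes/?page=3">
</head>
<body><a href="/shoes/">Previous</a></body>
"""

print(pagination_urls_not_in_anchors("https://example.com/shoes/?page=2", SAMPLE))
```

Any URL returned here would only be discovered by a crawler through the pagination attribute, which is the situation where enabling ‘crawl’ changes what gets crawled.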

2) Crawl The Website

Open up the SEO Spider, type or copy in the website you wish to crawl in the ‘Enter URL to spider’ box and hit ‘Start’.

The website will be crawled, and URLs referenced in rel=”next” and rel=”prev” attributes will be extracted and crawled.

Now grab a coffee and wait until the progress bar reaches 100%, and the crawl is completed.

3) View The Pagination Tab

The Pagination tab shows all URLs found in a crawl and will show any URLs referenced in rel=”next” and rel=”prev” attributes in individual columns in the main window pane.

The Pagination tab has 10 filters (as shown in the image below) that help you understand your pagination attribute implementation, and identify common pagination problems.

8 of the 10 filters are available to view immediately, either during or at the end of a crawl. The ‘Unlinked Pagination URLs’ and ‘Pagination Loop’ filters require calculation at the end of the crawl via post ‘Crawl Analysis’ for them to be populated with data (more on this in just a moment).

The right-hand ‘overview’ pane displays a ‘(Crawl Analysis Required)’ message against the filters that require post crawl analysis to be populated with data.

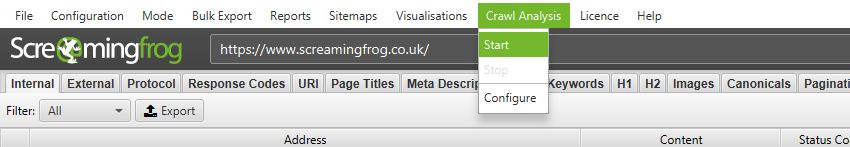

4) Click ‘Crawl Analysis > Start’ To Populate Pagination Filters

To populate the ‘Unlinked Pagination URLs’ and ‘Pagination Loop’ filters, you simply need to click a button to start crawl analysis.

However, if you have configured ‘Crawl Analysis’ previously, you may wish to double check under ‘Crawl Analysis > Configure’ that ‘Pagination’ is ticked.

You can also untick other items that require post crawl analysis to make this step quicker.

When crawl analysis has completed, the ‘analysis’ progress bar will be at 100% and the filters will no longer have the ‘(Crawl Analysis Required)’ message.

5) Click ‘Pagination’ & View Populated Filters

After performing post crawl analysis, all pagination filters will now be populated with data where applicable.

The pagination data collected can then be reviewed in columns, to ensure the implementation is as required. You’re able to filter by the following –

- Contains Pagination – The URL has a rel=”next” and/or rel=”prev” attribute, indicating it’s part of a paginated series.

- First Page – The URL only has a rel=“next” attribute, indicating it’s the first page in a paginated series. It’s easy and useful to scroll through these URLs and ensure they are accurately implemented on the parent page in the series.

- Paginated 2+ Pages – The URL has a rel=“prev” on it, indicating it’s not the first page, but a paginated page in a series. Again, it’s useful to scroll through these URLs and ensure only paginated pages appear under this filter.

- Pagination URL Not In Anchor Tag – A URL contained in either, or both, the rel=”next” and rel=”prev” attributes of the page is not found as a hyperlink in an HTML anchor element on the page itself. Paginated pages should be linked to with regular links to allow users to click and navigate to the next page in the series. They also allow Google to crawl from page to page, and PageRank to flow between pages in the series. Google’s own Webmaster Trends analyst John Mueller recommended proper HTML links for pagination as well in a Google Webmaster Central Hangout.

- Non-200 Pagination URL – The URLs contained in the rel=”next” and rel=”prev” attributes do not respond with a 200 ‘OK’ status code. This can include URLs blocked by robots.txt, no responses, 3XX (redirects), 4XX (client errors) or 5XX (server errors). Pagination URLs must be crawlable and indexable and therefore non-200 URLs are treated as errors, and ignored by the search engines. The non-200 pagination URLs can be exported in bulk via the ‘Reports > Pagination > Non-200 Pagination URLs’ export.

- Unlinked Pagination URL – The URLs contained in the rel=”next” and rel=”prev” attributes are not linked to across the website. Pagination attributes may not pass PageRank like a traditional anchor element, so this might be a sign of a problem with internal linking, or the URLs contained in the pagination attribute. The unlinked pagination URLs can be exported in bulk via the ‘Reports > Pagination > Unlinked Pagination URLs’ export.

- Non-Indexable – The paginated URL is non-indexable. Generally they should all be indexable, unless there is a ‘view-all’ page set, or there are extra parameters on pagination URLs, and they require canonicalising to a single URL. One of the most common mistakes is canonicalising page 2+ paginated pages to the first page in a series. Google recommend against this implementation because the component pages don’t actually contain duplicate content. Another common mistake is using ‘noindex’, which can mean Google drops paginated URLs from the index completely and stops following outlinks from those pages, which can be a problem for the products on those pages. This filter will help identify these common set-up issues.

- Multiple Pagination URLs – There are multiple rel=”next” and rel=”prev” attributes on the page (when there shouldn’t be more than a single rel=”next” or rel=”prev” attribute). This may mean that they are ignored by the search engines.

- Pagination Loop – This will show URLs that have rel=”next” and rel=”prev” attributes that loop back to a previously encountered URL. Again, this might mean that the expressed pagination series is simply ignored by the search engines.

- Sequence Error – This shows URLs that have an error in the rel=”next” and rel=”prev” HTML link elements sequence. This check ensures that URLs contained within rel=”next” and rel=”prev” HTML link elements reciprocate and confirm their relationship in the series.
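The ideas behind the ‘First Page’, ‘Sequence Error’ and ‘Pagination Loop’ filters can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions, not the SEO Spider's actual rules. The `pages` dictionaries, the function names and the /p1 style URLs are all hypothetical, and the loop check only follows the first rel=”next” URL on each page.

```python
def first_pages(pages):
    """URLs that have a rel="next" but no rel="prev"."""
    return sorted(
        url for url, rels in pages.items()
        if rels.get("next") and not rels.get("prev")
    )


def sequence_errors(pages):
    """Return (source, rel, target) triples whose target does not point back."""
    opposite = {"next": "prev", "prev": "next"}
    errors = []
    for url, rels in pages.items():
        for rel, back in opposite.items():
            for target in rels.get(rel, []):
                # A target missing from the crawl cannot confirm the relationship.
                if url not in pages.get(target, {}).get(back, []):
                    errors.append((url, rel, target))
    return sorted(errors)


def has_pagination_loop(pages, start):
    """Follow rel="next" from start; True if any URL is reached twice."""
    seen = set()
    current = start
    while current is not None:
        if current in seen:
            return True
        seen.add(current)
        nexts = pages.get(current, {}).get("next", [])
        current = nexts[0] if nexts else None
    return False


# Hypothetical crawl data: URL -> pagination attributes found on that URL.
good = {
    "/p1": {"next": ["/p2"]},
    "/p2": {"prev": ["/p1"], "next": ["/p3"]},
    "/p3": {"prev": ["/p2"]},
}
# Same series, but /p3 points back to /p1 in both attributes.
broken = {
    "/p1": {"next": ["/p2"]},
    "/p2": {"prev": ["/p1"], "next": ["/p3"]},
    "/p3": {"prev": ["/p1"], "next": ["/p1"]},
}

for name, pages in (("good", good), ("broken", broken)):
    print(name, first_pages(pages), sequence_errors(pages),
          has_pagination_loop(pages, "/p1"))
```

In the `broken` data, /p2 declares /p3 as its next page but /p3 does not declare /p2 as its previous page, so the relationship is not reciprocated. Following rel=”next” from /p1 also returns to /p1, which is the kind of loop the last check is meant to catch.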

6) Use The ‘Reports > Pagination > X’ Exports To Bulk Export Source URLs & Errors

To bulk export details of source pages that contain errors or issues for pagination, use the ‘Reports > Pagination’ options.

For example, the ‘Reports > Pagination > Unlinked Pagination URLs’ export will include details of the source pages that contain the rel=”next” and rel=”prev” attributes that are not linked to across the site.

This can sometimes be easier to digest than the user interface, as source URLs and pagination links are included in individual rows.

Further Support

The guide above should help illustrate the simple steps required to test pagination implementation and issues across a website using the SEO Spider.

Please also read our Screaming Frog SEO Spider FAQs and full user guide for more information on the tool.

If you have any further queries, then just get in touch via support.