How To Audit XML Sitemaps Using The SEO Spider

XML Sitemaps should be up to date, error free, and include indexable, canonical versions of URLs to help search engines crawl and index the URLs that are important for a website.

This tutorial walks you through how to use the Screaming Frog SEO Spider to audit XML Sitemaps, either by crawling them as part of a site crawl, or by uploading them separately and crawling the XML Sitemap URLs.

To get started, you’ll need to download the SEO Spider, which is free in lite form for crawling up to 500 URLs. You can download via the buttons in the right hand side bar. Crawling a sitemap as part of a crawl requires paid access; however, you can upload and analyse an XML Sitemap in list mode using the free version.

The benefit of auditing an XML Sitemap as part of a site crawl is that you can match the contents of the crawl against the XML Sitemap to discover orphan URLs (URLs in the XML Sitemap, but not linked to internally on the site), or URLs that were found in the crawl but are missing from the XML Sitemap.

Uploading an XML Sitemap separately means you won’t have data on orphan URLs, or on URLs found in a crawl that are missing from the XML Sitemap. Both approaches are covered in the two sections below, so you can skip to the one that matches your preference.

Crawl A Site & Audit XML Sitemaps

This section of the guide shows how to set up a crawl that also integrates URLs from the XML Sitemap.

1) Select ‘Crawl Linked XML Sitemaps’ under ‘Configuration > Spider > Crawl’

You can choose to discover the XML Sitemaps via robots.txt (this requires a ‘Sitemap: https://www.example.com/sitemap.xml’ entry), or supply the destination of the XML Sitemap.
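To illustrate what discovery via robots.txt relies on, here is a minimal Python sketch that pulls the ‘Sitemap:’ entries out of a robots.txt body. It is not how the SEO Spider itself does it; the function name `sitemaps_from_robots` and the example file contents are assumptions made up for this illustration.

```python
def sitemaps_from_robots(robots_txt: str) -> list[str]:
    """Return the URLs declared on 'Sitemap:' lines of a robots.txt body."""
    found = []
    for line in robots_txt.splitlines():
        # Drop trailing comments, then split the directive from its value.
        line = line.split("#", 1)[0].strip()
        key, sep, value = line.partition(":")
        # The directive name is matched case-insensitively.
        if sep and key.strip().lower() == "sitemap" and value.strip():
            found.append(value.strip())
    return found


# Hypothetical robots.txt contents, for illustration only.
example_robots = """\
User-agent: *
Disallow: /private/

Sitemap: https://www.example.com/sitemap.xml
sitemap: https://www.example.com/news-sitemap.xml
"""

print(sitemaps_from_robots(example_robots))
```

If no ‘Sitemap:’ line is present the list comes back empty, which is the situation where you would supply the destination of the XML Sitemap manually instead.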

2) Crawl The Website

Open up the SEO Spider, type or copy in the website you wish to crawl in the ‘enter url to spider’ box and hit ‘Start’.

The website and XML Sitemaps will subsequently be crawled. Wait until the crawl finishes and reaches 100%.

3) View The Sitemaps Tab

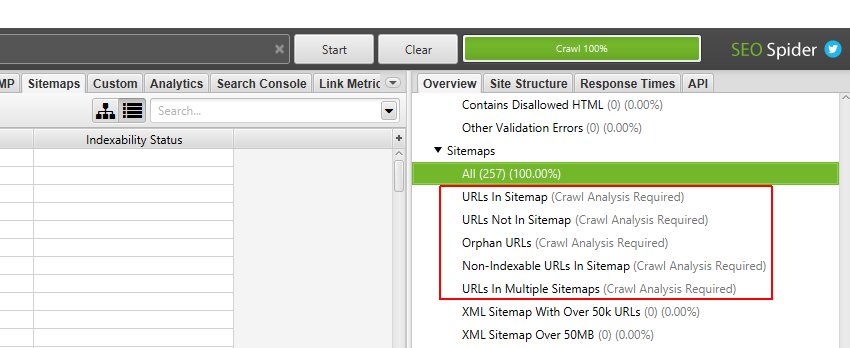

The Sitemaps tab has 7 filters in total that help group data by type, and identify common issues with XML Sitemaps.

Only two of the filters are available to view in real-time during a crawl. The other five require calculation at the end of the crawl via ‘Crawl Analysis’ before they are populated with data (more on this in just a moment).

The right hand ‘overview’ pane displays a ‘(Crawl Analysis Required)’ message against filters that require post crawl analysis to be populated with data.

This is because the SEO Spider will only know which URLs are missing from an XML Sitemap, and vice versa, when the entire crawl completes.
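The reason this comparison has to wait for the end of the crawl can be sketched as two set differences, which are only meaningful once both sets are complete. The Python below is a simplified illustration, not the SEO Spider’s implementation; the function name and the example URLs are hypothetical, and it ignores indexability, which the real ‘URLs Not In Sitemap’ filter takes into account.

```python
def compare_crawl_to_sitemap(crawled_urls, sitemap_urls):
    """Compare URLs reached via internal links with URLs listed in the sitemap."""
    crawled = set(crawled_urls)
    in_sitemap = set(sitemap_urls)
    return {
        # Found by following links, but absent from the XML Sitemap.
        "not_in_sitemap": sorted(crawled - in_sitemap),
        # Listed in the XML Sitemap, but never reached via internal links.
        "orphans": sorted(in_sitemap - crawled),
    }


# Hypothetical data, for illustration only.
crawled = [
    "https://www.example.com/",
    "https://www.example.com/blog/",
]
listed = [
    "https://www.example.com/",
    "https://www.example.com/old-landing-page/",
]

report = compare_crawl_to_sitemap(crawled, listed)
print(report)
```

Running the same comparison part way through a crawl would wrongly report every not-yet-crawled page as an orphan.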

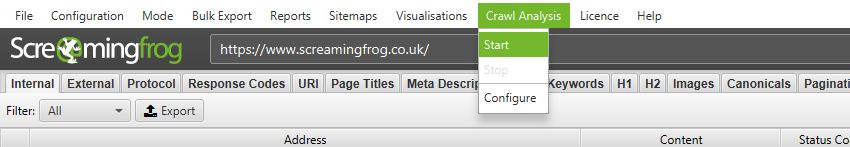

4) Click ‘Crawl Analysis > Start’ To Populate Sitemap Filters

To populate these five sitemap filters you simply need to click a button.

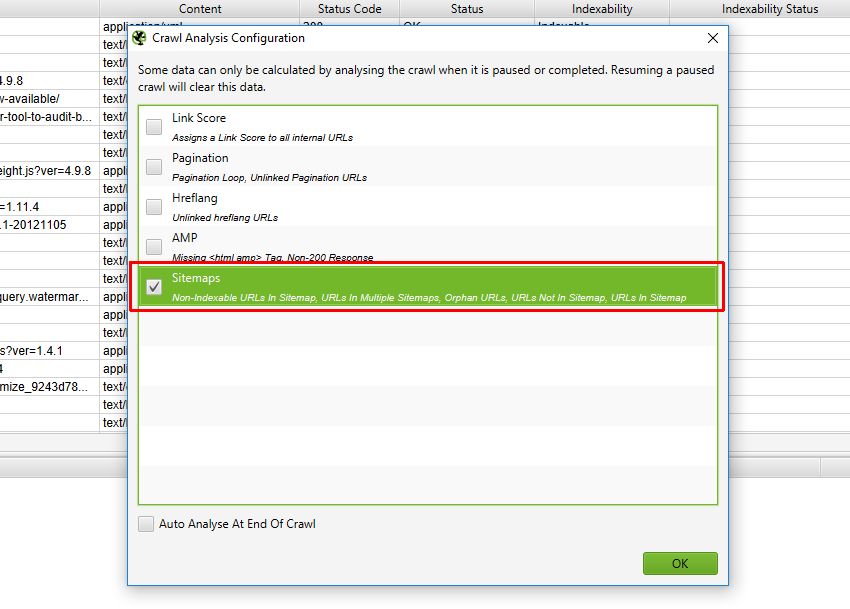

However, if you have configured ‘Crawl Analysis’ previously, you may wish to double check under ‘Crawl Analysis > Configure’ that ‘Sitemaps’ is ticked.

You can also untick other items that require post crawl analysis to make this step quicker.

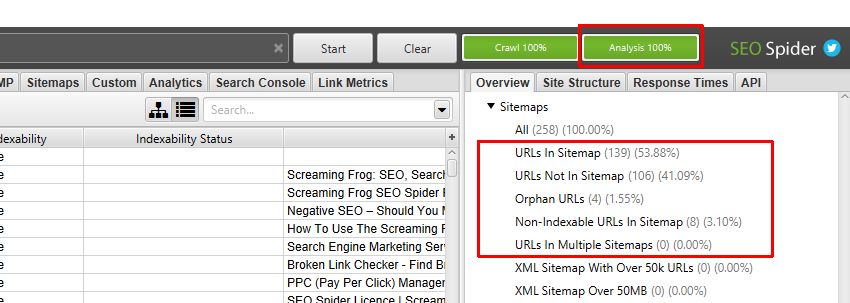

When crawl analysis has completed, the ‘analysis’ progress bar will be at 100% and the filters will no longer have the ‘(Crawl Analysis Required)’ message.

You can now view the populated filters.

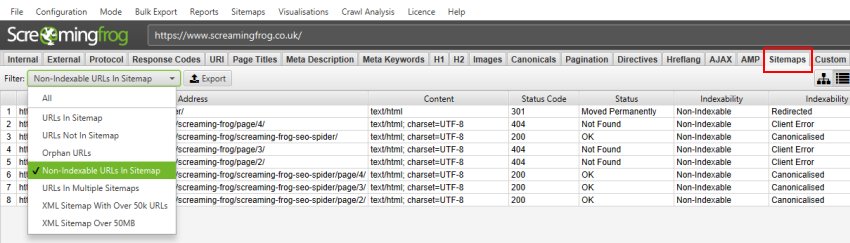

5) Click ‘Sitemaps’ & View the Filters

After performing post crawl analysis, all sitemap filters will now be populated with data where applicable.

You’re able to filter by the following –

- URLs In Sitemap – All URLs that are in an XML Sitemap. This should contain indexable and canonical versions of important URLs.

- URLs Not In Sitemap – URLs that are not in an XML Sitemap, but were discovered in the crawl. This might be on purpose (as they are not important), or they might be missing, and the XML Sitemap needs to be updated to include them. This filter does not consider non-indexable URLs, as it assumes they are correctly non-indexable, and therefore shouldn’t be flagged to be included.

- Orphan URLs – URLs that are only in an XML Sitemap, and were not discovered during the crawl. This also covers URLs that were only discovered via other URLs in the XML Sitemap, rather than through the site crawl. These might be accidentally included in the XML Sitemap, or they might be pages that you wish to be indexed, and should really be linked to internally.

- Non-Indexable URLs in Sitemap – URLs that are in an XML Sitemap, but are non-indexable, and hence should be removed, or their indexability needs to be fixed.

- URLs In Multiple Sitemaps – URLs that are in more than one XML Sitemap. This isn’t necessarily a problem, but generally a URL only needs to be in a single XML Sitemap.

- XML Sitemap With Over 50k URLs – This shows any XML Sitemap that has more than the permitted 50k URLs.

- XML Sitemap With Over 50mb – This shows any XML Sitemap that is larger than the permitted 50MB (uncompressed) file size.
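The two limit filters at the end of this list can be approximated outside the tool with a short check. This is a sketch under stated assumptions: it uses Python’s standard `xml.etree.ElementTree`, reads the 50MB limit as 52,428,800 bytes of uncompressed XML, and the function name `check_sitemap_limits` and the example sitemap are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50MB, taken here as 52,428,800 bytes


def check_sitemap_limits(xml_bytes: bytes) -> dict:
    """Count <url><loc> entries and measure the uncompressed size."""
    root = ET.fromstring(xml_bytes)
    url_count = len(root.findall(f"{SITEMAP_NS}url/{SITEMAP_NS}loc"))
    size_bytes = len(xml_bytes)
    return {
        "url_count": url_count,
        "size_bytes": size_bytes,
        "over_url_limit": url_count > MAX_URLS,
        "over_size_limit": size_bytes > MAX_BYTES,
    }


# Hypothetical sitemap, for illustration only.
example_sitemap = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/about/</loc></url>
</urlset>"""

limits = check_sitemap_limits(example_sitemap)
print(limits)
```

A sitemap that trips either flag would normally be split into several smaller XML Sitemaps referenced from a Sitemap index file.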

The filters above will help you review that only your indexable, canonical URLs are included in the XML Sitemap.

Bing have little tolerance for ‘dirt’ in XML Sitemaps, such as URLs that return errors, redirect or are non-indexable, which can mean they place less trust in the XML Sitemap for crawling and indexing.

Google also recommend using XML Sitemaps to help with canonicalisation of URLs (defining the preferred version of URLs), so it’s important to keep them healthy, and provide clear and consistent signals.

Please see more information on XML Sitemaps at Sitemaps.org and Google Search Console help.

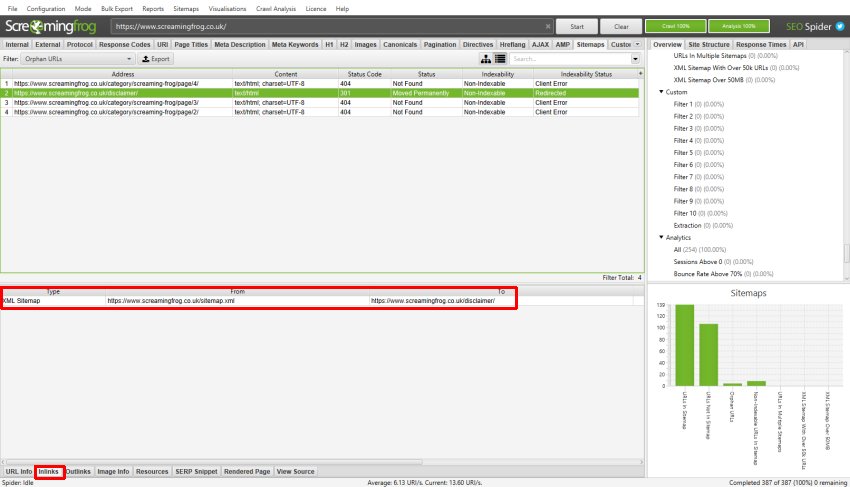

6) View The XML Sitemap Source By Clicking ‘Inlinks’

If you have multiple XML Sitemaps, you’ll want to know which of the XML Sitemaps contains a non-indexable URL, or orphan URL etc.

To do this, simply click on a URL in the top window pane and then click on the ‘Inlinks’ tab at the bottom to populate the lower window pane. Inlinks with the ‘XML Sitemap’ type are references to the URL from an XML Sitemap.

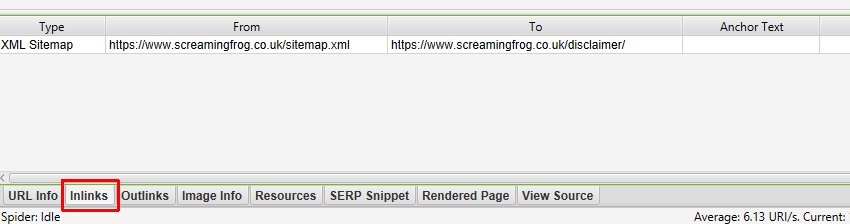

Here’s a close up view of the ‘inlinks’ lower window tab –

In this example, the /disclaimer/ URL is in /sitemap.xml.

It redirects to our /privacy/ page, which should be in there instead.
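Conceptually, the ‘Inlinks’ view answers the question “which XML Sitemaps reference this URL?”. A rough Python sketch of that lookup is an inverted index from URL to sitemaps, which also surfaces URLs listed in more than one sitemap. The function name, the second sitemap and all full URLs below are hypothetical; only the /disclaimer/ and /sitemap.xml paths mirror the example above.

```python
from collections import defaultdict


def sitemaps_by_url(sitemap_contents):
    """Map each URL to the set of XML Sitemaps that list it."""
    index = defaultdict(set)
    for sitemap, urls in sitemap_contents.items():
        for url in urls:
            index[url].add(sitemap)
    return dict(index)


# Hypothetical sitemap contents, for illustration only.
sitemap_contents = {
    "https://www.example.com/sitemap.xml": [
        "https://www.example.com/disclaimer/",
        "https://www.example.com/privacy/",
    ],
    "https://www.example.com/pages-sitemap.xml": [
        "https://www.example.com/privacy/",
    ],
}

index = sitemaps_by_url(sitemap_contents)

# Which sitemap needs editing to remove the redirecting URL?
print(sorted(index["https://www.example.com/disclaimer/"]))

# URLs that appear in more than one XML Sitemap.
duplicates = {url: sorted(s) for url, s in index.items() if len(s) > 1}
print(duplicates)
```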

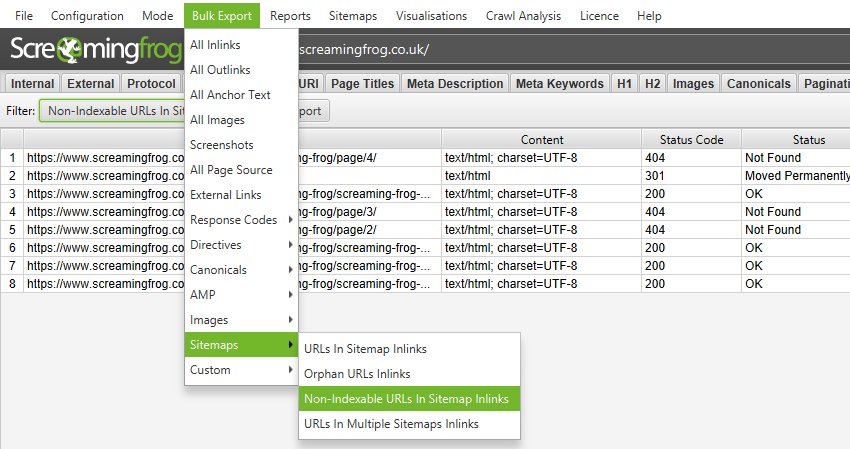

7) Use The ‘Bulk Export > Sitemaps > X Inlinks’ Exports

If you have multiple XML Sitemaps, this is an essential step, so you know which URLs relate to which XML Sitemaps.

To bulk export XML Sitemap inlink data, use the ‘Bulk Export > Sitemaps’ top level menu.

In the screenshot above, this would export all XML Sitemaps which have non-indexable URLs within them.

Upload & Audit XML Sitemaps Separately

You can audit an XML Sitemap separately (away from a site crawl), by uploading it in list mode. This process is outlined below.

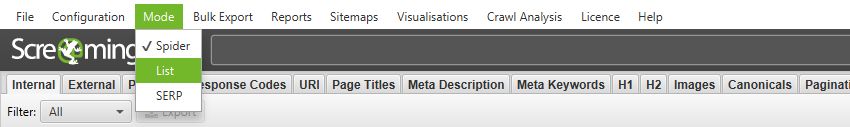

1) Click ‘Mode > List’

Select this via the top level menu. This enables you to upload a list of URLs, or download an XML Sitemap directly.

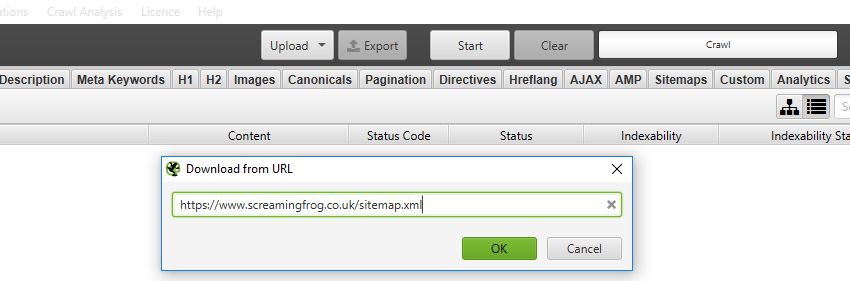

2) Choose to ‘Upload A File’ or ‘Download XML Sitemap’

If you have a saved XML Sitemap file, you may wish to upload the file. However, if it’s already live, you can simply choose to ‘Download XML Sitemap’ and input the URL.

If you have a Sitemap index file, which contains a number of XML Sitemaps, then choose ‘Download XML Sitemap’ to crawl them all in one go.
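For context, a Sitemap index file is simply an XML document listing the child XML Sitemaps. The following Python sketch, which assumes the standard sitemaps.org `<sitemapindex>` format and uses a made-up function name and example file, shows how the child sitemap locations can be read out of it.

```python
import xml.etree.ElementTree as ET

# Prefix-to-namespace mapping for the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def child_sitemaps(index_xml: bytes) -> list[str]:
    """Return the <loc> of every <sitemap> entry in a Sitemap index file."""
    root = ET.fromstring(index_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:sitemap/sm:loc", NS)
        if loc.text
    ]


# Hypothetical Sitemap index, for illustration only.
example_index = b"""<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://www.example.com/sitemap-pages.xml</loc></sitemap>
  <sitemap><loc>https://www.example.com/sitemap-posts.xml</loc></sitemap>
</sitemapindex>"""

children = child_sitemaps(example_index)
print(children)
```

Each child would then be fetched and parsed in turn, which is the work ‘Download XML Sitemap’ saves you from doing by hand.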

Click ‘OK’, and ‘OK’ again, to kick start the crawl.

3) Follow The Process Outlined from Point 3 In the Guide Above

Now you can follow the same process outlined from point 3 in the ‘Crawl A Site & Audit XML Sitemaps’ section above. This includes running a crawl analysis at the end of a crawl to populate filters within the Sitemaps tab.

It’s worth remembering that uploading an XML Sitemap via list mode won’t be as comprehensive, as the SEO Spider won’t have data on which of those URLs can be found in a site crawl.

This means the ‘URLs Not In Sitemap’ and ‘Orphan URLs’ filters will not be populated as this data isn’t known.

Further Support

The guide above should help illustrate the simple steps required to bulk audit XML Sitemaps using the SEO Spider.

Please also read our Screaming Frog SEO Spider FAQs and full user guide for more information on the tool.

If you have any further queries on the process outlined above, then just get in touch via support.