Green SEO: Leveraging Screaming Frog for a Sustainable Web

Mark Porter, Stu Davies & Will Barnes

Posted 16 April, 2026 by Mark Porter, Stu Davies & Will Barnes in Screaming Frog SEO Spider, SEO

As SEOs, we obsess over performance.

Speed. Crawl efficiency. Core Web Vitals. Conversions.

But we rarely talk about the environmental cost of what we ship.

The internet now accounts for roughly 4% of global greenhouse gas emissions – on par with the aviation industry. Since 2015, the average size of web pages has ballooned dramatically, increasing the energy required to serve them. And with the rapid rise of generative AI, energy demand across digital infrastructure is only accelerating.

This isn’t abstract. Every page view consumes energy. Every unnecessary asset, bloated script or redundant page increases the load – on servers, networks, devices and ultimately the planet.

This article was co-authored by GreenSEO co-founders Stu Davies and Will Barnes, alongside Mark Porter, Head of Marketing at Screaming Frog.

What is GreenSEO?

GreenSEO is about bringing sustainability into everyday SEO practice.

It’s not a new discipline. It’s not a badge. It’s a shift in mindset.

It means:

- Designing lighter, faster websites

- Reducing unnecessary crawl waste

- Questioning digital sprawl

- Measuring impact, not just rankings

If sustainable web designers have the Sustainable Web Manifesto, GreenSEO is the SEO industry’s equivalent call to action.

Why GreenSEO Matters Now

SEOs sit at the intersection of infrastructure, content and performance. We influence hosting decisions, crawl behaviour, page weight, media usage and technical architecture. That influence carries responsibility.

The good news? Much of what makes a site greener also makes it better: faster load times, cleaner architecture, reduced waste.

GreenSEO isn’t about perfection. It’s about awareness, measurement and incremental improvement.

And that starts with knowing where we stand.

GreenSEO & Screaming Frog’s Partnership

GreenSEO and Screaming Frog have joined forces to make a real impact on digital sustainability. The partnership began when GreenSEO approached Screaming Frog about adding CO2 measurement to its crawler, the SEO Spider, so that SEOs could measure and reduce the carbon footprint of websites.

Screaming Frog has been a fantastic ally, backing GreenSEO’s initiatives, including sponsoring the GreenSEO fringe events at the BrightonSEO international SEO conference.

Promoting Sustainable SEO Practices

The collaboration between GreenSEO and Screaming Frog is all about harnessing technology and data to promote sustainable SEO practices.

Together, we’re equipping SEOs with the essential tools and insights they need to measure, analyse, and lower the carbon emissions linked to their websites. This partnership truly highlights the strength of working together to foster a greener web.

About the Screaming Frog SEO Spider CO2 Capabilities

One of the most practical steps SEOs can take today is measuring what we’re actually emitting. Version 20.0 of the Screaming Frog SEO Spider introduced the carbon footprint and rating feature. While crawling a site, the SEO Spider automatically calculates carbon emissions for each page using the CO2.js library.

At a high level, the CO2.js library takes an amount of data in bytes as input and estimates the carbon emissions produced by moving that data across the internet.
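As a rough illustration of that bytes-in, emissions-out idea, here is a minimal Python sketch. It is not CO2.js and not the SEO Spider's implementation. The function name and both default constants (energy per gigabyte and grid carbon intensity) are illustrative assumptions, and real models also apportion the estimate across data centres, networks and devices.

```python
def estimate_co2_grams(
    bytes_transferred: int,
    kwh_per_gb: float = 0.81,                 # assumed energy intensity, illustrative only
    grid_intensity_g_per_kwh: float = 442.0,  # assumed grid carbon intensity, illustrative only
) -> float:
    """Estimate the grams of CO2 emitted to transfer a given number of bytes."""
    if bytes_transferred < 0:
        raise ValueError("bytes_transferred must be non-negative")
    gigabytes = bytes_transferred / 1_000_000_000
    energy_kwh = gigabytes * kwh_per_gb
    return energy_kwh * grid_intensity_g_per_kwh


if __name__ == "__main__":
    page_weight_bytes = 2_000_000  # a hypothetical 2 MB page
    print(f"{estimate_co2_grams(page_weight_bytes):.3f} g CO2 per view")
```

Because the estimate scales linearly with bytes in this sketch, halving a page's transfer size halves its estimated emissions per view.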

Alongside the CO2 calculation there is a carbon rating for each URL crawled, using the Digital Carbon Rating Scale from Sustainable Web Design. This rating considers datacentres, network transfer and device usage in calculations.

Actionable Insights

The CO2 calculation and rating system can be used as a benchmark, as well as a catalyst to contribute to a more sustainable web, helping you to pinpoint high carbon rating pages and resources on your site.

Reducing your carbon footprint often overlaps with performance optimisation: lighter pages are usually faster pages.

You can then consider optimisation opportunities to reduce file size and CO2 emissions, and improve carbon footprint and rating.

The CO2 calculation and carbon rating can also be combined with analytics data to help prioritise which areas or URLs to focus on. It makes sense to tackle pages with high traffic and a high carbon rating before pages with low traffic and a high carbon rating. Sustainability becomes strategic when it’s prioritised by impact, not just by URL.
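One way to put that prioritisation into practice is to multiply each URL's estimated CO2 per view by its page views and sort by the result. The sketch below assumes you have already exported per-URL CO2 figures from a crawl and page views from your analytics tool. The data structures, field names and sample numbers are hypothetical.

```python
from typing import NamedTuple


class PageImpact(NamedTuple):
    url: str
    co2_per_view_g: float
    monthly_views: int

    @property
    def monthly_co2_g(self) -> float:
        # Total estimated emissions for the month: per-view CO2 times views.
        return self.co2_per_view_g * self.monthly_views


def prioritise(crawl_co2: dict[str, float], analytics_views: dict[str, int]) -> list[PageImpact]:
    """Rank URLs by estimated monthly emissions, highest first."""
    pages = [
        PageImpact(url, co2, analytics_views.get(url, 0))  # URLs with no analytics data count as 0 views
        for url, co2 in crawl_co2.items()
    ]
    return sorted(pages, key=lambda page: page.monthly_co2_g, reverse=True)


# Hypothetical sample data for illustration only.
crawl_co2 = {"/": 0.9, "/blog/heavy-post/": 2.5, "/pricing/": 0.3}
analytics_views = {"/": 50_000, "/blog/heavy-post/": 200, "/pricing/": 8_000}
ranked = prioritise(crawl_co2, analytics_views)
```

In this made-up data the heaviest page per view ranks last, because so few people visit it, while the busy homepage ranks first.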

What the SEO Spider Can Help With

Baseline Your Current Position

The first step in cutting down your website’s carbon footprint is to measure where you currently stand. The Screaming Frog SEO Spider gives you a clear picture of your website’s energy use and carbon emissions. This information is essential for crafting a sustainability plan and keeping track of your progress over time.

Did you know that, on average, a web page generates about 0.4 grams of CO2 with each view? By assessing your site’s carbon footprint, you can pinpoint areas that need improvement and establish goals for reduction.
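To turn a per-view figure into a baseline you can track over time, a simple back-of-the-envelope calculation is often enough. The sketch below uses the 0.4 g average quoted above. The traffic figure is a hypothetical assumption, and the function name is illustrative.

```python
def annual_co2_kg(co2_per_view_g: float, monthly_views: int) -> float:
    """Convert grams of CO2 per page view into kilograms of CO2 per year."""
    grams_per_year = co2_per_view_g * monthly_views * 12
    return grams_per_year / 1000


# 0.4 g per view (the average quoted above) at a hypothetical 100,000 views a month.
baseline_kg = annual_co2_kg(0.4, 100_000)
```

Re-running the same calculation after each round of optimisation gives a consistent number to report against your reduction goals.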

Create a Roadmap / Get Buy-In

Armed with these insights, you can develop a thorough sustainability roadmap. This plan will detail the steps necessary to lower your website’s carbon footprint while also rallying support from stakeholders. By showcasing compelling data and setting clear objectives, you can win over key decision-makers and inspire real change.

Take, for example, Wholegrain Digital, which managed to cut the Ecover website’s carbon emissions by 60% by following a digital sustainability roadmap. Their strategy involved optimising images, minimising server requests, and switching to a green web host.

Measure the Impact of Sustainable Practices

The SEO Spider turns sustainability from theory into measurable action.

Infrastructure

Start with the foundations – where your site lives and how it’s accessed.

- Switch to Green Web Hosts: Hosting powered by renewable energy can reduce operational emissions by up to 90%¹, effectively overnight.

- Optimise Crawl Budgets: Smarter crawl management reduces unnecessary server strain and digital waste.

Performance

Next, focus on weight and efficiency – lighter pages mean lower energy use.

- Optimise Media Files: Compress images/videos to cut energy use significantly.

- Improve Core Web Vitals: Faster pages use up to 20% less energy.

- Remove Redundant Content: Faster load times (up to 50% improvement²) reduce energy consumption.

Experience

Finally, look at how design and UX influence consumption at the device level.

- Implement Dark Mode: Save up to 39%³ energy on OLED screens.

- Apply Eco Design Practices: Optimise fonts/design to reduce rendering energy.

- Enhance UX Flow: Better journeys cut bounce rates and energy use.

Not every action will move the needle equally – combining emissions data with traffic data helps prioritise changes that deliver the biggest real-world impact.

How to Carbon Audit a Website Using the SEO Spider

If we’re serious about GreenSEO, measurement can’t be superficial. We need to crawl the site as users experience it.

The most important step in reducing a site’s carbon output is to measure and benchmark its current state and CO2 output. This is where the Screaming Frog SEO Spider comes in. With the right settings, the crawler allows SEOs to measure the impact of an entire site.

Ben Meyer, who spoke at a previous GreenSEO event, has done a deep dive on running carbon audits with Screaming Frog and even has a Looker Studio dashboard you can plug your data into.

How to Save Energy When Using the SEO Spider

GreenSEO isn’t just about what we audit, it’s about how we audit. As well as taking proactive steps to reduce your carbon footprint by addressing high carbon pages and resources on your site, you can also crawl more responsibly when using the Screaming Frog SEO Spider.

Crawling Responsibly

Firstly, consider whether you actually need to crawl the entire site. Often it’s just not required. Websites are generally templated, and a sample crawl of page types from across various sections will be enough to make informed decisions across the wider site.

Why crawl 5m URLs when 50k is enough? With a few simple adjustments, you can avoid wasting time and resources on URLs you don’t need.
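If you already have a list of known URLs (from a sitemap or a previous crawl, say), one way to build a representative sample is to keep a handful of URLs per top-level section and crawl only those. This is a minimal sketch of that idea. Grouping by the first path segment is a simplifying assumption that will not suit every site structure, and the function name and example URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def sample_by_section(urls: list[str], per_section: int) -> list[str]:
    """Keep at most `per_section` URLs for each top-level folder, in input order."""
    kept: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        segments = [segment for segment in urlparse(url).path.split("/") if segment]
        section = segments[0] if segments else "(root)"  # homepage and similar
        if len(kept[section]) < per_section:
            kept[section].append(url)
    return [url for group in kept.values() for url in group]


# Hypothetical URL list for illustration only.
known_urls = [
    "https://example.com/",
    "https://example.com/blog/a/",
    "https://example.com/blog/b/",
    "https://example.com/blog/c/",
    "https://example.com/products/1/",
    "https://example.com/products/2/",
]
sample = sample_by_section(known_urls, per_section=2)
```

The resulting list could then be crawled as a list rather than spidering the whole site.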

As well as this, it’s often best to use JavaScript crawling selectively.

JavaScript crawling is slower and more intensive, as all resources (whether JavaScript, CSS or images) need to be fetched so that each web page can be rendered in a headless browser in the background to construct the DOM.

While this isn’t an issue for smaller websites, for a large site with many thousands of pages or more, it can make a big difference. If your site doesn’t rely on JavaScript to significantly manipulate pages, then there’s no need to waste time and resources on rendering.

Other resource intensive features include things like spelling and grammar checks and AI integrations. Essentially, you always need to ask yourself if you require a feature for the task at hand, and select accordingly.

Adjust Default Crawl Configuration

The more data that’s collected and the more that’s crawled, the more intensive the crawl will be. There are various ways to carry out a ‘lighter’ crawl, such as deselecting resources you don’t need under ‘Configuration > Spider > Crawl’.

Please note: if you’re crawling in JavaScript rendering mode, you’ll likely need most of these options enabled, otherwise it will impact the render.

Deselecting any of the following page links under ‘Configuration > Spider > Crawl’ will also help save resources:

- Crawl & Store External Links

- Crawl & Store Canonicals

- Crawl & Store Pagination

- Crawl & Store Hreflang

- Crawl & Store AMP

You can also stop non-essential attributes from being stored under ‘Configuration > Spider > Extraction’ to help save memory, such as meta keywords, or items you’re not auditing, such as structured data.

We also recommend disabling the custom link positions feature via ‘Configuration > Custom > Link Positions’. This uses up more memory, by storing the XPath of every link found in a crawl to classify its position (navigation, sidebar, footer etc).

You can find further config refinements within our tutorial on crawling large sites.

Exclude Unnecessary URLs

There are various ways to narrow the crawl to avoid processing unnecessary URLs. The most obvious one is the exclude feature, allowing you to exclude entire sections, filters, URL parameters, subfolders and more.

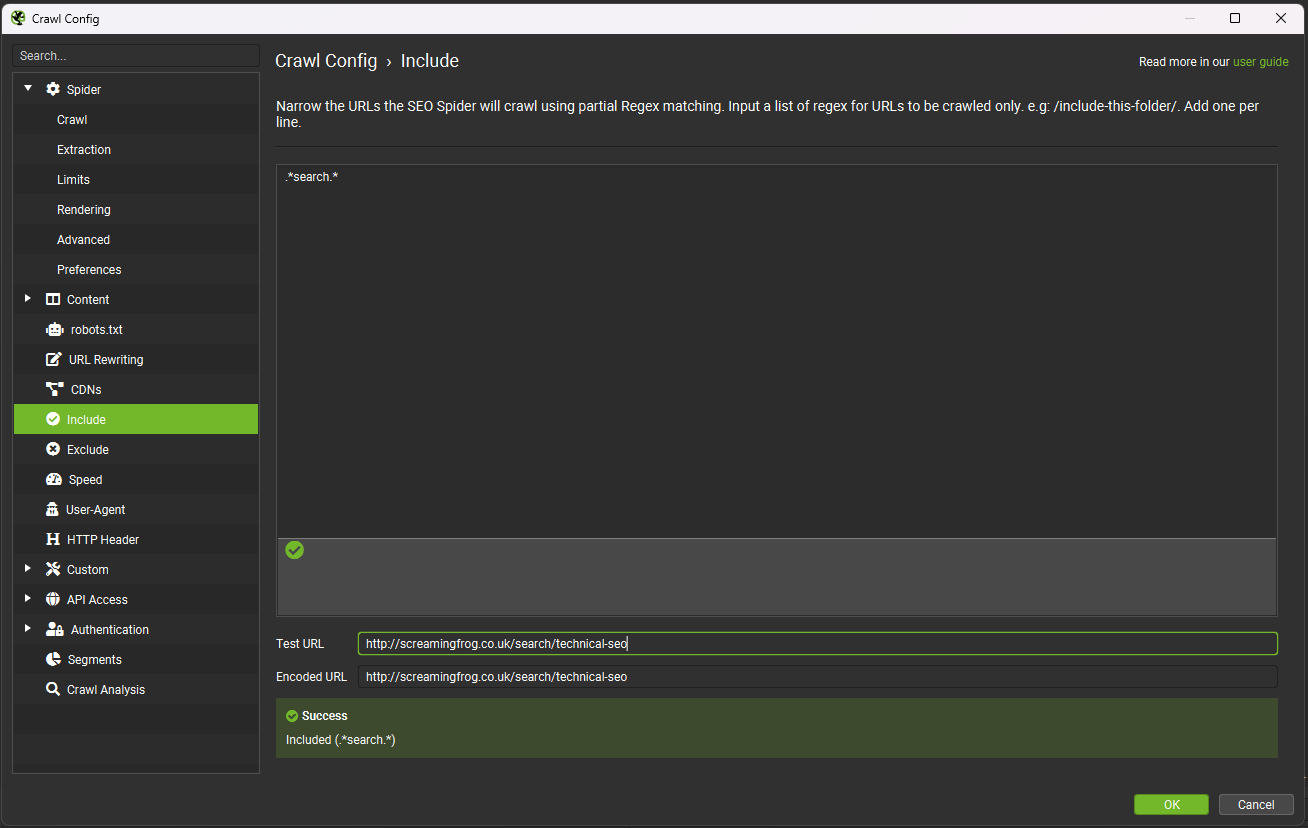

On the flipside, you can use the include feature to control which URL path the SEO Spider will crawl. As an example, if you wanted to crawl pages from https://www.screamingfrog.co.uk which have ‘search’ in the URL string, you would simply add the regex .*search.* to the ‘include’ feature.

This would find the /search-engine-marketing/ and /search-engine-optimisation/ pages as they both have ‘search’ in them.
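Before committing to a crawl, it can help to sanity-check a pattern against a few URLs. The sketch below does that with Python's `re` module, which is only an approximation: the SEO Spider applies its own regex engine and matching rules, so treat this as a quick check rather than a guarantee. The third URL and the function name are illustrative assumptions.

```python
import re

# The include pattern from the example above.
INCLUDE_PATTERN = re.compile(r".*search.*")


def is_included(url: str) -> bool:
    """Return True if the whole URL matches the include pattern."""
    return INCLUDE_PATTERN.fullmatch(url) is not None


test_urls = [
    "https://www.screamingfrog.co.uk/search-engine-marketing/",
    "https://www.screamingfrog.co.uk/search-engine-optimisation/",
    "https://www.screamingfrog.co.uk/seo-spider/",  # illustrative non-matching URL
]
included = [url for url in test_urls if is_included(url)]
```

Because the pattern starts and ends with `.*`, it behaves the same whether the tool requires a full match or a partial match.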

Lastly, there are various limits available that allow you to get a sample of pages from across the site, without crawling everything. These include:

- Limit Crawl Total – Limit the total number of pages crawled overall. Browse the site to get a rough estimate of how many might be required to crawl a broad selection of templates and page types.

- Limit Crawl Depth – Limit the depth of the crawl to key pages, allowing enough depth to get a sample of all templates.

- Limit Max URI Length To Crawl – Avoid crawling incorrect relative linking or very deep URLs, by limiting by length of the URL string.

- Limit Max Folder Depth – Limit the crawl by folder depth, which can be more useful for sites with intuitive structures.

- Limit Number of Query Strings – Limit crawling lots of facets and parameters by number of query strings. By setting the query string limit to ‘1’, you allow the SEO Spider to crawl a URL with a single parameter (?colour=blue, for example), but no more. This can be helpful when various parameters can be appended to URLs in different combinations!

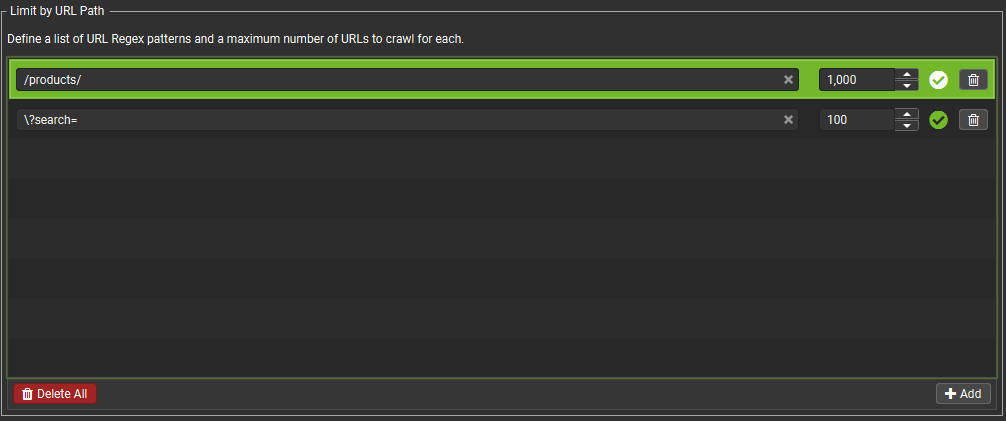
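To get a feel for how a query string limit would affect a list of URLs, you can count parameters yourself before crawling. This is a minimal sketch using Python's standard library. Exactly how the SEO Spider counts parameters is an assumption here, and the function names and example URLs are hypothetical.

```python
from urllib.parse import parse_qsl, urlsplit


def query_param_count(url: str) -> int:
    """Count the query string parameters in a URL, including blank-valued ones."""
    return len(parse_qsl(urlsplit(url).query, keep_blank_values=True))


def within_query_limit(url: str, limit: int) -> bool:
    """Return True if the URL has no more than `limit` query parameters."""
    return query_param_count(url) <= limit


# Hypothetical faceted URLs for illustration only.
single = "https://example.com/shoes?colour=blue"
combined = "https://example.com/shoes?colour=blue&size=9"
```

With a limit of 1, the first URL would pass this check and the second, which stacks two facets, would not.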

One of the newer limits is ‘Limit by URL Path’, which makes it possible to limit the total number of URLs that match a specific regular expression. For example, entering ‘/products/’ under ‘Configuration > Spider > Limits > Limit by URL Path’ limits the total number of URLs containing ‘/products/’ that the Spider will crawl.

By default this is set to 1,000 but can be adjusted. It is possible to set multiple rules in this section with different limits.

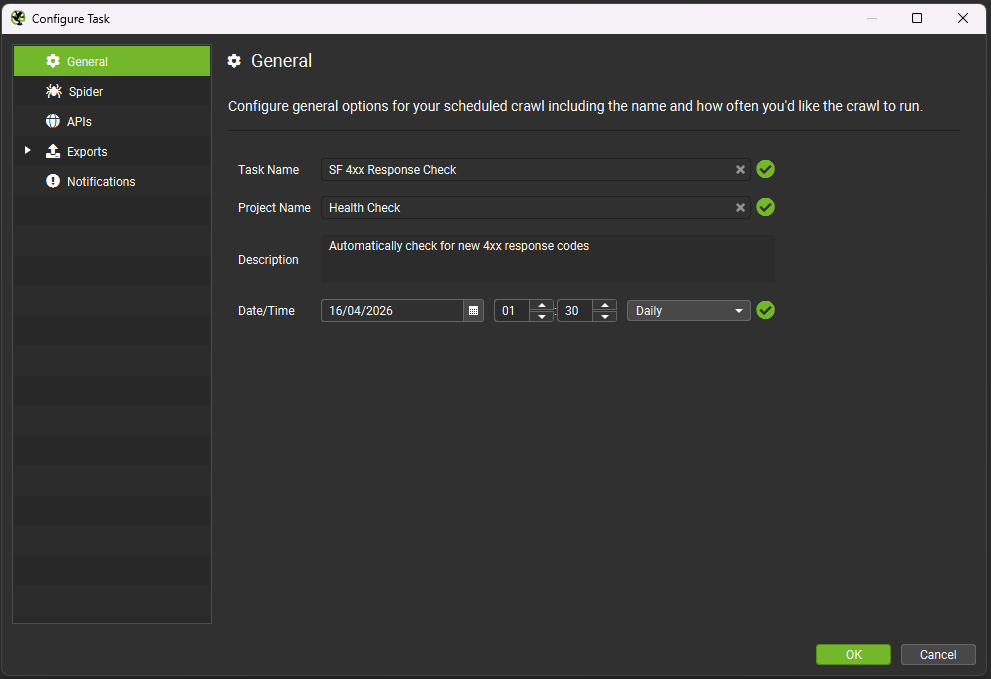
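The effect of per-path limits can be simulated on a URL list to estimate how large a sampled crawl would be. The sketch below is a simulation of the behaviour described above, not the SEO Spider's implementation. How overlapping rules interact is an assumption (here, a URL is dropped once any matching rule has reached its cap), and the function name and example data are hypothetical.

```python
import re


def apply_path_limits(urls: list[str], limits: dict[str, int]) -> list[str]:
    """Keep URLs in order, capping how many may match each regex in `limits`."""
    counts = {pattern: 0 for pattern in limits}
    kept = []
    for url in urls:
        matching = [pattern for pattern in limits if re.search(pattern, url)]
        if any(counts[pattern] >= limits[pattern] for pattern in matching):
            continue  # at least one matching rule is already at its cap
        for pattern in matching:
            counts[pattern] += 1
        kept.append(url)  # URLs matching no rule are always kept
    return kept


# Hypothetical URLs and a cap of two product pages, for illustration only.
discovered = [
    "https://example.com/products/1/",
    "https://example.com/products/2/",
    "https://example.com/products/3/",
    "https://example.com/blog/a/",
]
limited = apply_path_limits(discovered, {"/products/": 2})
```

Running this against a sitemap export with a few candidate limits gives a quick sense of the crawl size before you start.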

Schedule Crawls to Run During Off-Peak Energy Periods

You’re able to schedule crawls to run automatically within the SEO Spider, as a one-off, or at chosen intervals. This feature can be found under ‘File > Scheduling’ within the app.

You’re able to preselect the mode (spider, or list), saved configuration and more.

This allows you to schedule crawls to run overnight, when energy demand is lower and, on many power grids, a larger share of electricity comes from renewable sources rather than fossil fuels.

Conclusion

Sustainable SEO isn’t a separate discipline.

It’s simply good optimisation – done with awareness.

As SEOs, we influence hosting choices, site architecture, crawl behaviour, performance budgets and content sprawl. That influence carries responsibility. The good news is that much of what makes a site greener also makes it better: faster, leaner, more efficient.

The introduction of carbon measurement within the Screaming Frog SEO Spider gives the industry something we’ve lacked for years – visibility. Once you can measure something, you can prioritise it. Once you can prioritise it, you can improve it.

GreenSEO isn’t about perfection. It’s about progress. It’s about making one better decision at a time – informed by data, not guesswork.

If this is a conversation you’d like to continue, we’ll be discussing practical GreenSEO approaches, case studies and tools at the next GreenSEO fringe event at BrightonSEO.

Because the future of SEO isn’t just about ranking.

It’s about responsibility.
